My mom sends me animal videos on Instagram. Lately they’ve been… too cute. So I check the comments. The top one is often just “AI”. Ah. Too Cute to be True. We’re entering a world where seeing is no longer believing.

We’ve spent centuries learning to distrust what we read. We have not yet learned to distrust what we see, and AI is exploiting that gap.

We’ve had words for this for a while: propaganda, disinformation, misinformation. “Fake news” became the popular term during the 2016 US presidential election. The exposure of Cambridge Analytica’s role was the first loud warning bell, showing the extent to which ‘fake news’ could be weaponized through precisely targeted ads.

The underlying issue here is falsehood. Fake news a decade ago was a nuisance, some bad weather. Twitter invented an umbrella, Community Notes, to identify and fight against falsehood. But now that AI has made falsehood cheap, scalable, and convincing, we have a Category 5 Blizzard headed towards us. So what are we supposed to do about it?

Silicon Spirit Animals

The Blizzard is Coming. There’s no stopping it, only defending ourselves from it.

Cool tech like the Leica M11-P, a camera that cryptographically signs every image it takes, is directionally correct. But you can’t control what tools others use, only what you do to defend yourself.

The only way to defend against the onslaught of AI is with AI: an AI that you can trust. Every piece of digital media*, before it makes it to your eyes or ears, should be filtered or annotated by an AI of your own choosing. The media can be analyzed for many things, chiefly its truthfulness.

Humans have evolved to judge the truthfulness of other humans. We have not evolved to judge the truthfulness of AI-generated content. Only AI can be built to judge (and research) at this scale. We must each learn to trust our own AI, our own unique silicon spirit animal, to be our partner, an extension of ourselves, when we’re spending time in the digital world.

Trust

“Trust” has become a common topic in AI, but in Crypto, trust, or rather trustlessness, is the topic. An important tenet of Crypto is to remove the need to trust any third party. Independent verifiability and incentive structures build a foundation to stand on without the need for trust.

The AI you and I use today has many layers of third-party trust built in: the teams that write the system prompt, the teams that train the models and produce their weights, and the company that operates the hardware.

For privacy and uncensorability, the important distinction is whose hardware it’s running on. If it’s on your own hardware, no one else can read your conversations, and no one can turn it off.

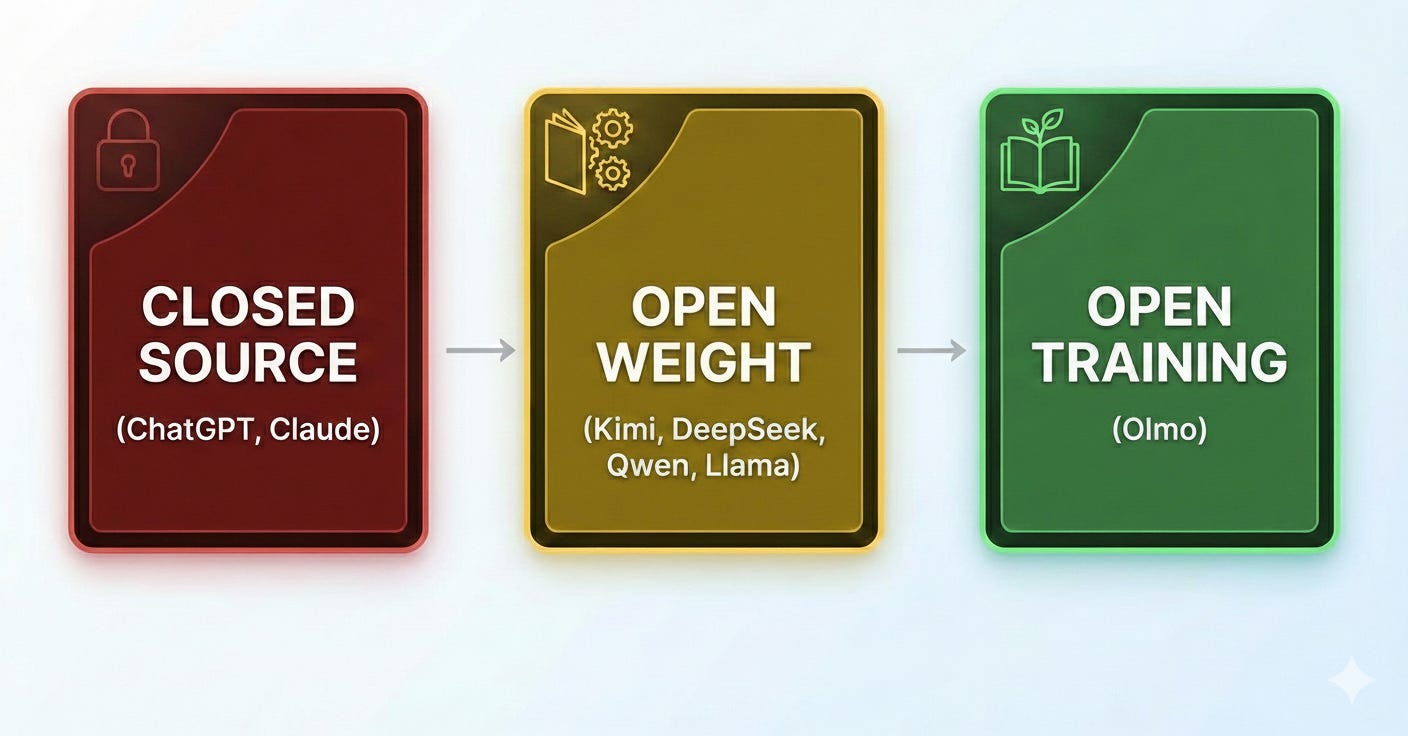

For trusting that the AI’s output is in your best interest, the distinction is how open the model is. The level of trustability is based on how transparent and independently verifiable the model is.

What is ‘Open Training’ and why does it matter?

An Open Weight model is like a binary blob. You can look at the weights, you can study what happens inside of it when you use it, but you cannot reproduce it. You have no way to verify how it was created, nor do you know what might be hidden inside its billions of parameters. Not unlike how the CIA might plant a sleeper agent in an enemy regime and wait years for the perfect time to activate them, sleeper agents can be hidden in the weights. The only way to truly understand and trust a model is transparency into how it was trained (the data, objectives, and post‑training reinforcement learning) such that the process is independently reproducible. Olmo is the best example of an Open Training model.
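To make the binary blob point concrete, here is a minimal Python sketch of the one check an Open Weight release lets you run yourself: hashing the file you downloaded and comparing it to a digest the publisher announced. The function names and the idea of a published SHA-256 digest are my own assumptions for illustration, not any particular lab's release process.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_digest(path: Path, published_hex: str) -> bool:
    """True if the local file hashes to the digest the publisher announced.

    Hypothetical helper: assumes the publisher posts a hex SHA-256 digest.
    """
    expected = published_hex.strip().lower()
    return hmac.compare_digest(sha256_of_file(path), expected)
```

A passing check only tells you that you hold the same blob everyone else does. It says nothing about how that blob was trained or what might be hiding in it, which is exactly the gap Open Training is meant to close.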

Our path forward

For maximum trust that your Silicon Spirit Animal is on your team, we should strive to run Open Training models on our own hardware. Everyone needs an AI whose top priority, above all else, is you. There can be no corporate or government interests embedded in the AI. This is the only way to truly trust the AI you use daily to defend you from the onslaught. The Blizzard is coming. Let’s not get caught in it.